Landmark Classification & Tagging

A computer vision application that identifies global landmarks from user-uploaded images using custom CNN architectures and transfer learning with ResNet18.

Landmark Classification & Tagging for Social Media

📌 Project Overview

In the age of social media, automatically identifying the location of a photo is a valuable feature for tagging and organization. This project focuses on building a robust landmark classifier capable of identifying global landmarks from raw image data.

The project was implemented in two main phases:

- CNN from Scratch: Designing and training a custom Convolutional Neural Network architecture to achieve a baseline accuracy of over 50% on 50 landmark classes.

- Transfer Learning: Leveraging a pre-trained ResNet18 model to significantly boost classification performance through fine-tuning.

Technologies Used: Python, PyTorch, TorchScript, Jupyter Notebooks, Matplotlib, Seaborn.

🚀 Key Features & Implementation

1. Custom CNN Architecture

Developed a multi-block CNN backbone inspired by VGG architectures:

- Convolutional Blocks: Multiple layers with $3 \times 3$ filters and padding to maintain spatial dimensions.

- Batch Normalization: Integrated to accelerate training and improve stability.

- Adaptive Average Pooling: Used to produce a fixed-size embedding vector regardless of input image dimensions.

- MLP Head: A fully connected head with Dropout to prevent overfitting.
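The four components above can be sketched in PyTorch as follows. This is a minimal illustration rather than the project's actual network: the number of blocks, the channel widths (32 to 256), the hidden size of 512, the dropout rate, and the names `conv_block` and `LandmarkCNN` are all assumptions chosen for the example.

```python
import torch
import torch.nn as nn


def conv_block(in_ch: int, out_ch: int) -> nn.Sequential:
    """One VGG-style block: 3x3 conv (padding keeps H and W), BatchNorm, ReLU, 2x2 max-pool."""
    return nn.Sequential(
        nn.Conv2d(in_ch, out_ch, kernel_size=3, padding=1),
        nn.BatchNorm2d(out_ch),
        nn.ReLU(inplace=True),
        nn.MaxPool2d(2),
    )


class LandmarkCNN(nn.Module):
    """Hypothetical from-scratch classifier; layer sizes are illustrative only."""

    def __init__(self, num_classes: int = 50, dropout: float = 0.5):
        super().__init__()
        self.backbone = nn.Sequential(
            conv_block(3, 32),
            conv_block(32, 64),
            conv_block(64, 128),
            conv_block(128, 256),
            # Collapses any spatial size to 1x1, so the embedding is always 256-d.
            nn.AdaptiveAvgPool2d(1),
            nn.Flatten(),
        )
        self.head = nn.Sequential(
            nn.Linear(256, 512),
            nn.ReLU(inplace=True),
            nn.Dropout(dropout),
            nn.Linear(512, num_classes),
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.head(self.backbone(x))
```

Because of the adaptive pooling layer, the same model accepts, for example, both 224x224 and 160x192 inputs and returns one logit per class in each case.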

2. High-Performance Transfer Learning

Replaced the custom backbone with a pre-trained ResNet18 model:

- Feature Extraction: Froze the early layers of ResNet to preserve generalized visual features learned from ImageNet.

- Classifier Refinement: Redesigned the final linear layer to map to the specific 50 landmark categories.

- Optimization: Achieved a test accuracy of ~73%, a significant improvement over the from-scratch model.

3. Advanced Preprocessing Pipeline

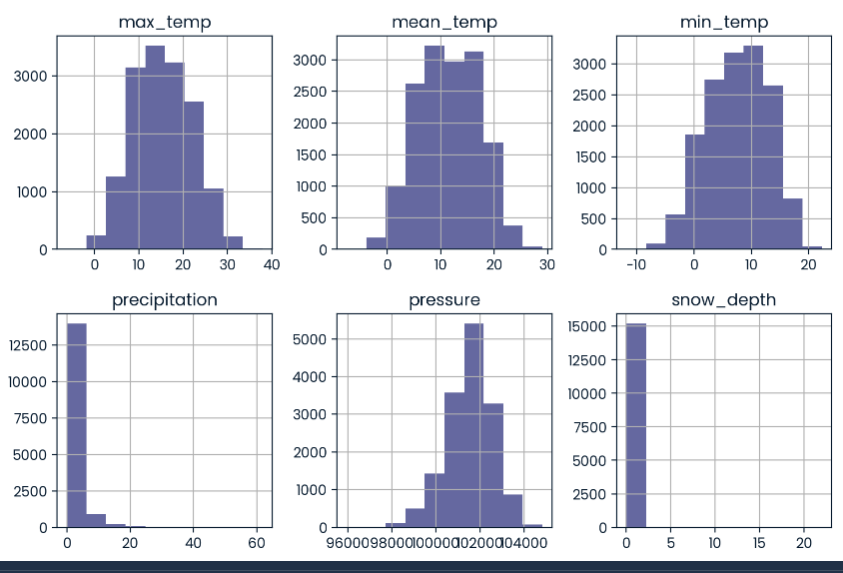

Implemented a robust data augmentation strategy to improve model generalization:

- Training Augmentation: Utilized `RandomResizedCrop`, `RandomHorizontalFlip`, `ColorJitter`, and `RandAugment`.

- Normalization: Normalized images using the mean and standard deviation of the entire dataset to aid gradient descent.

4. Production Readiness with TorchScript

- Model Export: Exported the trained model using TorchScript for efficient deployment without requiring a Python interpreter.

- Predictor Wrapper: Engineered a `Predictor` class that encapsulates the preprocessing, inference, and post-processing (Softmax) logic into a single serialized artifact.
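As a rough illustration of the idea, the wrapper below bundles resizing, normalization, the model, and Softmax into one module and compiles it with `torch.jit.script`. It is a sketch under assumptions, not the project's `Predictor`: the fixed 224x224 resize, the expectation of float inputs in [0, 1], and the helper name `export_predictor` are all invented for the example.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Predictor(nn.Module):
    """Wraps a classifier with its preprocessing and Softmax post-processing."""

    def __init__(self, model: nn.Module, mean, std):
        super().__init__()
        self.model = model.eval()
        # Buffers are saved inside the exported artifact alongside the weights.
        self.register_buffer("mean", torch.tensor(mean).view(1, 3, 1, 1))
        self.register_buffer("std", torch.tensor(std).view(1, 3, 1, 1))

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # x: float batch of shape (N, 3, H, W) with values in [0, 1].
        x = F.interpolate(x, size=[224, 224], mode="bilinear", align_corners=False)
        x = (x - self.mean) / self.std
        logits = self.model(x)
        return F.softmax(logits, dim=1)  # class probabilities


def export_predictor(model: nn.Module, mean, std) -> torch.jit.ScriptModule:
    """Compile the wrapper to TorchScript so it can be saved and reloaded on its own."""
    predictor = Predictor(model, mean, std).eval()
    return torch.jit.script(predictor)
```

The returned module can be written to disk with `scripted.save("predictor.pt")` (file name illustrative) and restored with `torch.jit.load`, without needing the original class definitions.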

📊 Evaluation

The model’s performance was analyzed using:

- Confusion Matrix: Visualizing predicted versus true classes to identify similar-looking landmarks that the model confuses, and to read off class-wise precision and recall.

- Top-5 Accuracy: Measuring the model’s ability to include the correct landmark within its top 5 predictions.
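Both metrics can be computed directly from the model's logits. The sketch below uses plain PyTorch; the function names `topk_accuracy` and `confusion_matrix` are hypothetical helpers, not part of the project's code. The matrix uses rows for true classes and columns for predicted classes.

```python
import torch


def topk_accuracy(logits: torch.Tensor, targets: torch.Tensor, k: int = 5) -> float:
    """Fraction of samples whose true class is among the k highest-scoring classes."""
    topk = logits.topk(k, dim=1).indices               # (N, k)
    hits = (topk == targets.unsqueeze(1)).any(dim=1)   # (N,)
    return hits.float().mean().item()


def confusion_matrix(preds: torch.Tensor, targets: torch.Tensor, num_classes: int) -> torch.Tensor:
    """Entry [i, j] counts samples of true class i that were predicted as class j."""
    flat = targets * num_classes + preds
    counts = torch.bincount(flat, minlength=num_classes * num_classes)
    return counts.reshape(num_classes, num_classes)


def precision_recall(cm: torch.Tensor):
    """Class-wise precision (per column) and recall (per row) from a confusion matrix."""
    diag = cm.diag().float()
    precision = diag / cm.sum(dim=0).clamp(min=1)
    recall = diag / cm.sum(dim=1).clamp(min=1)
    return precision, recall
```

Large off-diagonal entries point to pairs of landmarks that the model tends to mix up, and the matrix can be passed to a Seaborn heatmap for plotting.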

💡 What I Learned

- Architecture Design Trade-offs: Gained experience in balancing model depth and complexity with training time and resource constraints.

- The Power of Transfer Learning: Empirically verified how pre-trained models can drastically reduce the amount of data and compute needed for specialized tasks.

- Deployment Best Practices: Learned to use TorchScript to bridge the gap between experimentation in Jupyter and production-ready code.