AgricGPT

An agricultural domain-specific language model fine-tuned on 210k+ instruction pairs using QLoRA for efficient expert knowledge adaptation.

AgricGPT: Domain-Specific LLM Fine-Tuning

📌 Project Overview

General-purpose LLMs often lack the specialized, granular knowledge required for niche domains like agriculture. AgricGPT is a specialized language model developed to act as an agricultural expert, bridging this knowledge gap through targeted domain adaptation.

By fine-tuning Microsoft’s Phi-2 (2.7B) model on approximately 210,000 agricultural instruction-response pairs, I built an assistant designed to give accurate advice on crop management, soil health, and pest control while remaining small enough to run on consumer-grade hardware.

Technologies Used: Python, PyTorch, HuggingFace Transformers, PEFT (Parameter-Efficient Fine-Tuning), BitsAndBytes, Weights & Biases (W&B).

🚀 Technical Implementation

1. Model Selection (Phi-2)

Chose Phi-2 (2.7B parameters) for its strong performance relative to its size. Its small footprint makes it well suited to specialized applications that must balance reasoning capability against low inference latency.
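To put the "small footprint" in numbers, the snippet below estimates the memory needed just to hold the model weights at different precisions. It is a rough sketch under stated assumptions: exactly 2.7 billion parameters and 1 GB = 10^9 bytes. It ignores activations, gradients, optimizer state, the KV cache, and the small overhead of 4-bit scale factors, so the figures are lower bounds, not measurements.

```python
# Back-of-the-envelope memory needed to store Phi-2's weights at several precisions.
# Assumptions: 2.7e9 parameters (the nominal size); 1 GB = 1e9 bytes;
# activations, gradients, optimizer state, and quantization overhead are ignored.

N_PARAMS = 2.7e9


def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Return the memory required to hold n_params weights, in gigabytes."""
    return n_params * bits_per_param / 8 / 1e9


for label, bits in [("fp32", 32), ("fp16/bf16", 16), ("4-bit", 4)]:
    print(f"{label:>9}: {weight_memory_gb(N_PARAMS, bits):.2f} GB")
```

This gives 10.80 GB in fp32, 5.40 GB in fp16/bf16, and 1.35 GB at 4 bits. Full fine-tuning would add gradients and optimizer state on top of the 16-bit figure, which is the memory pressure that 4-bit quantization plus LoRA relieves.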

2. Parameter-Efficient Fine-Tuning (PEFT) with QLoRA

Leveraged QLoRA (4-bit quantization + LoRA) to fine-tune the model. Key aspects of this technique:

- Quantization: Stored the frozen 16-bit base weights in 4-bit precision, drastically lowering VRAM usage.

- Low-Rank Adapters: Kept the base model weights frozen and trained only small low-rank adapter matrices added to selected layers.

- Efficiency: Made it possible to fine-tune the 2.7B-parameter model on a single 16 GB GPU while preserving the base model’s foundational reasoning abilities.
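The interplay of the three points above can be illustrated with a toy, pure-Python layer. This is a sketch, not the project's training code, and it makes a deliberate substitution: instead of QLoRA's 4-bit NormalFloat (NF4) data type with double quantization, it uses a simpler symmetric absmax 4-bit quantizer. All names (`ToyQLoRALinear`, `quantize_absmax_4bit`, `dequantize`, `matvec`) are hypothetical. The frozen base weight is stored only as 4-bit codes plus one scale per block and is dequantized for compute; the trainable matrices `A` and `B` add a low-rank update scaled by `alpha / rank`.

```python
import random

# Toy QLoRA-style linear layer in pure Python (illustration only).
# Assumption: a symmetric absmax 4-bit quantizer stands in for QLoRA's NF4 data type.


def quantize_absmax_4bit(values, block_size=64):
    """Quantize a flat list of floats, block by block, to signed 4-bit codes in [-7, 7].

    Returns one integer code per value and one float scale per block.
    """
    codes, scales = [], []
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        absmax = max(abs(v) for v in block)
        scale = absmax / 7 if absmax > 0 else 1.0
        scales.append(scale)
        codes.extend(max(-7, min(7, round(v / scale))) for v in block)
    return codes, scales


def dequantize(codes, scales, block_size=64):
    """Map 4-bit codes back to approximate floats using each block's scale."""
    return [c * scales[i // block_size] for i, c in enumerate(codes)]


def matvec(matrix, x):
    """Multiply a row-major matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in matrix]


class ToyQLoRALinear:
    """Frozen 4-bit base weight W plus a trainable low-rank update (alpha / rank) * B @ A."""

    def __init__(self, weight, rank=4, alpha=8, block_size=64, seed=0):
        self.d_out, self.d_in = len(weight), len(weight[0])
        self.rank = rank
        self.block_size = block_size
        # The frozen base weight is kept only as 4-bit codes plus per-block scales.
        flat = [w for row in weight for w in row]
        self.codes, self.scales = quantize_absmax_4bit(flat, block_size)
        # Trainable adapters: A gets small random values and B starts at zero,
        # so the adapter contributes nothing until B is updated by training.
        rng = random.Random(seed)
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(self.d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(self.d_out)]
        self.scaling = alpha / rank

    def base_weight(self):
        """Dequantize the frozen weight back to a d_out x d_in matrix for compute."""
        flat = dequantize(self.codes, self.scales, self.block_size)
        return [flat[r * self.d_in:(r + 1) * self.d_in] for r in range(self.d_out)]

    def forward(self, x):
        base = matvec(self.base_weight(), x)
        delta = matvec(self.B, matvec(self.A, x))
        return [b + self.scaling * d for b, d in zip(base, delta)]

    def trainable_params(self):
        # Only A (rank x d_in) and B (d_out x rank) would receive gradients.
        return self.rank * (self.d_in + self.d_out)


# Usage: a random 32 x 64 "pretrained" weight and a single input vector.
rng = random.Random(42)
d_out, d_in = 32, 64
W = [[rng.gauss(0.0, 0.02) for _ in range(d_in)] for _ in range(d_out)]
layer = ToyQLoRALinear(W, rank=4, alpha=8)
x = [rng.gauss(0.0, 1.0) for _ in range(d_in)]

y = layer.forward(x)
print(y == matvec(layer.base_weight(), x))  # True: B is zero, so only the base path contributes
print(layer.trainable_params(), "trainable vs", d_out * d_in, "frozen")  # 384 trainable vs 2048 frozen
```

Because `B` starts at zero, the adapted layer initially reproduces the quantized base layer exactly, and training only ever touches the small `A` and `B` matrices. In a real pipeline these pieces would typically come from BitsAndBytes (via Transformers' `BitsAndBytesConfig(load_in_4bit=True, ...)`) and PEFT (`LoraConfig`, `get_peft_model`), operating on GPU tensors rather than Python lists.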

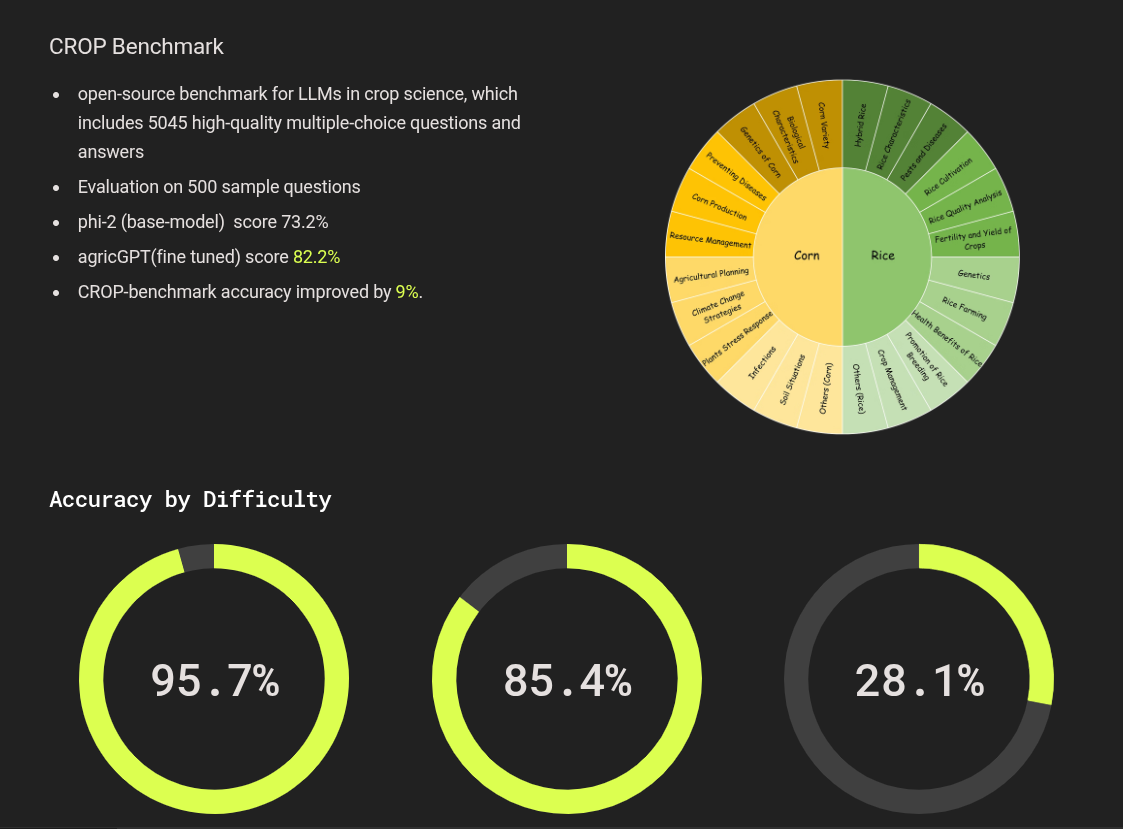

3. Dataset & Training (AI4Agr CROP-dataset)

Fine-tuned on the AI4Agr/CROP-dataset, comprising approximately 210,000 instruction-response pairs across various agricultural domains.

- Preprocessing: Tokenized with the Phi-2 tokenizer, applying custom padding and truncation strategies.

- Monitoring: Integrated with Weights & Biases (W&B) for real-time tracking of training loss and validation metrics.
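The preprocessing step does not spell out its exact padding and truncation strategy, so the following is only one plausible sketch, and every detail is an assumption: the prompt template is modelled on the `Instruct: ... Output:` QA format shown on Phi-2's model card, the helper names are hypothetical, and the token ids are toy values. In practice `prompt_ids` would come from running the Phi-2 tokenizer on `format_prompt(...)`. Prompt and padding positions are labelled `-100`, the default `ignore_index` of PyTorch's `CrossEntropyLoss`, so the loss covers only the response.

```python
# Hypothetical preprocessing helpers for one instruction-response pair.
# Assumptions: the prompt template, helper names, and token ids are illustrative only.

IGNORE_INDEX = -100  # default ignore_index of torch.nn.CrossEntropyLoss


def format_prompt(instruction: str) -> str:
    """Wrap an instruction in an assumed Phi-2-style "Instruct: ... Output:" template."""
    return f"Instruct: {instruction}\nOutput: "


def build_example(prompt_ids, response_ids, eos_id, pad_id, max_length):
    """Concatenate prompt + response + EOS, then right-truncate and right-pad to max_length.

    Labels hide the prompt and the padding (IGNORE_INDEX), so the training loss
    covers only the response tokens (and the closing EOS, if it fits).
    """
    input_ids = (list(prompt_ids) + list(response_ids) + [eos_id])[:max_length]
    labels = ([IGNORE_INDEX] * len(prompt_ids) + list(response_ids) + [eos_id])[:max_length]
    attention_mask = [1] * len(input_ids)

    n_pad = max_length - len(input_ids)
    input_ids += [pad_id] * n_pad
    labels += [IGNORE_INDEX] * n_pad   # padding never contributes to the loss
    attention_mask += [0] * n_pad      # and is hidden from attention
    return {"input_ids": input_ids, "attention_mask": attention_mask, "labels": labels}


# Toy ids standing in for real tokenizer output; the EOS id (2) doubles as the pad id.
example = build_example(prompt_ids=[11, 12, 13], response_ids=[21, 22],
                        eos_id=2, pad_id=2, max_length=8)
print(example["input_ids"])       # [11, 12, 13, 21, 22, 2, 2, 2]
print(example["attention_mask"])  # [1, 1, 1, 1, 1, 1, 0, 0]
print(example["labels"])          # [-100, -100, -100, 21, 22, 2, -100, -100]
```

Masking by position rather than by token id matters when the EOS token is reused for padding (a common convention when a tokenizer has no dedicated pad token): the genuine end-of-response EOS still contributes to the loss, while the padding copies do not.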

📊 Evaluation & Model Card

The model has been publicly released on the HuggingFace Hub, complete with detailed documentation and usage instructions.

- Link: AgricGPT-v1-Phi2 on HuggingFace

- Primary Use Case: Intelligent agricultural assistant for crop recommendation and disease diagnosis.

💡 What I Learned

- Mastering PEFT Workflows: Gained practical expertise in implementing QLoRA pipelines for domain-specific tasks.

- The Power of Small Models: Observed how, after targeted fine-tuning, a 2.7B-parameter model can outperform much larger general-purpose models within a specialized domain.

- HuggingFace Ecosystem: Deepened experience with the full HuggingFace stack, from dataset preparation to Hub deployment.